If someone has created a deepfake using your face, your voice, or your likeness, you now have a legal right to get it removed within 3 hours.

India’s February 2026 amendment to the IT Rules requires social media platforms and intermediaries to remove reported deepfake content within a 3-hour window from the time a valid complaint is filed. This is one of the strictest deepfake removal timelines in the world.

But here’s the problem: most victims don’t know this rule exists. And even those who do don’t know the exact process to invoke it. The news articles covering this rule explain that it exists. They don’t tell you how to actually use it step by step.

This guide is the victim’s playbook. It covers every step from identifying the deepfake to filing the complaint to escalating through legal channels if the platform doesn’t comply.

What the 3-Hour Rule Actually Says

In February 2026, the Ministry of Electronics and Information Technology (MeitY) issued an amendment to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021. The amendment specifically addresses AI-generated and manipulated media content.

Here’s what it requires:

Mandatory 3-hour removal window. When a victim (or their authorized representative) reports AI-generated or digitally manipulated content that depicts them without consent, the intermediary (social media platform, messaging service, hosting provider) must remove the content within 3 hours of receiving a valid complaint.

Applies to all significant social media intermediaries. These are platforms with more than 5 million registered users in India, including Instagram, Facebook, X (Twitter), YouTube, Telegram, WhatsApp (for broadcast/group content), and Snapchat.

Covers all types of deepfakes. Video deepfakes, audio deepfakes, face-swapped images, and AI-generated synthetic media that depicts a real person without their consent.

The victim doesn’t need to prove it’s fake first. The complaint triggers the removal obligation. The platform must remove first and evaluate later. If the platform determines the content isn’t actually a deepfake, they can restore it, but the default response to a valid complaint is removal.

Penalties for non-compliance. Platforms that fail to comply risk losing their intermediary safe harbor protection under Section 79 of the IT Act. This means they become directly liable for the content, a legal consequence platforms work hard to avoid.
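The deadline itself is simple clock arithmetic: the time the complaint was filed plus three hours. Here is a minimal Python sketch, assuming you note the filing time yourself in Indian Standard Time (UTC+05:30). `removal_deadline` and `is_overdue` are hypothetical helper names, not part of any official tool.

```python
from datetime import datetime, timedelta, timezone

# Indian Standard Time is a fixed UTC+05:30 offset (no daylight saving).
IST = timezone(timedelta(hours=5, minutes=30), "IST")

# The removal window described in the February 2026 amendment.
REMOVAL_WINDOW = timedelta(hours=3)


def removal_deadline(filed_at: datetime) -> datetime:
    """Return the time by which the reported content should be removed."""
    if filed_at.tzinfo is None:
        raise ValueError("filed_at must be timezone-aware")
    return filed_at + REMOVAL_WINDOW


def is_overdue(filed_at: datetime, now: datetime) -> bool:
    """True once the 3-hour window has fully elapsed."""
    return now > removal_deadline(filed_at)


filed = datetime(2026, 3, 10, 22, 15, tzinfo=IST)
print(removal_deadline(filed).isoformat())  # 2026-03-11T01:15:00+05:30
print(is_overdue(filed, datetime(2026, 3, 11, 0, 45, tzinfo=IST)))  # False
```

Using timezone-aware datetimes means a complaint filed late in the evening correctly rolls over to the next day.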

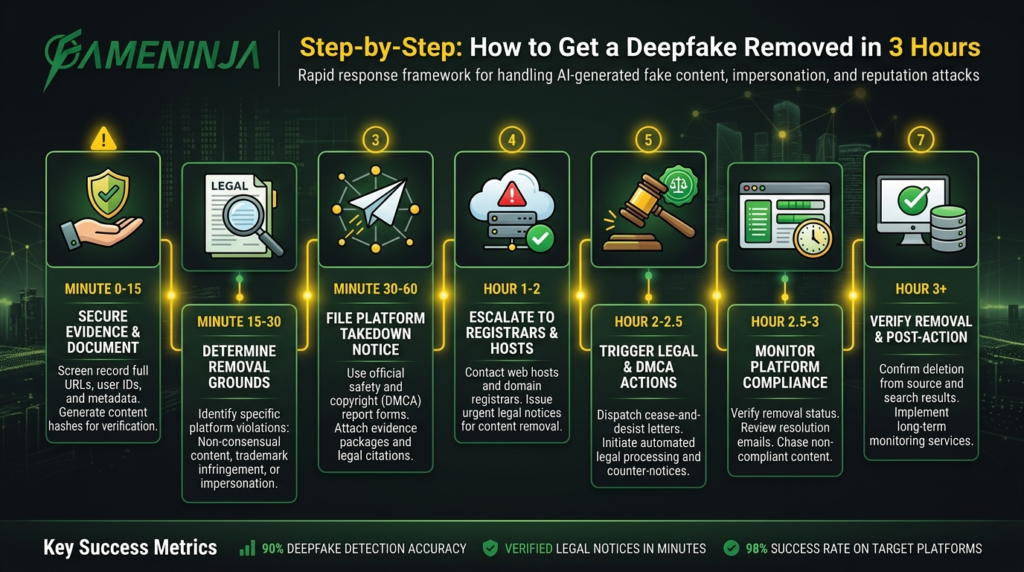

Step-by-Step: How to Get a Deepfake Removed in 3 Hours

Step 1: Document Everything (Before Filing)

Before you file any complaint, document the deepfake content thoroughly. This documentation serves two purposes: it provides evidence for your complaint, and it preserves evidence in case you need to pursue legal action later.

What to document:

Take screenshots of the deepfake content. Make sure the screenshots show the URL, the posting account, the date/time, and the content itself. If it’s a video, record the screen as you play the video.

Save the exact URL of the deepfake content. Copy it from the browser address bar, not from a shortened link. If the content is on multiple URLs, document each one.

Note the platform and account that posted it. Take a screenshot of the posting account’s profile page, including their username, display name, and bio.

Record the time you first discovered the content. This timestamp matters for any legal proceedings.

If possible, download the deepfake content to your device. Where the platform doesn’t allow downloads, use screen recording instead. This preserves the evidence even if the content is later deleted.

Save the original version of yourself (the unmanipulated photo, video, or audio) that was used to create the deepfake. This helps establish that the content is indeed a deepfake.
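Keeping this documentation in one structured log makes it easier to attach the same evidence to every complaint later. The sketch below is one way to do that in Python, assuming your screenshots and recordings are saved as local files. The field names are our own, and the SHA-256 fingerprint is an optional extra that lets you show a saved file has not been altered; it is not something the IT Rules require.

```python
import csv
import hashlib
from dataclasses import asdict, dataclass, fields
from datetime import datetime, timezone
from pathlib import Path
from typing import Iterable, Optional


@dataclass
class EvidenceItem:
    url: str            # full URL from the address bar, not a shortened link
    platform: str       # e.g. "Instagram"
    account: str        # username of the posting account
    discovered_at: str  # ISO 8601 time you first discovered the content
    file_path: str      # screenshot, screen recording, or downloaded copy
    sha256: str         # fingerprint of the saved file


def fingerprint(path: Path) -> str:
    """Return the SHA-256 hex digest of a saved file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def record(url: str, platform: str, account: str, file_path: Path,
           discovered_at: Optional[datetime] = None) -> EvidenceItem:
    """Create one log entry; the discovery time defaults to now (UTC)."""
    when = discovered_at or datetime.now(timezone.utc)
    return EvidenceItem(url, platform, account, when.isoformat(),
                        str(file_path), fingerprint(file_path))


def write_log(items: Iterable[EvidenceItem], out_path: Path) -> None:
    """Write every entry to a CSV file you can attach to complaints."""
    with out_path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=[f.name for f in fields(EvidenceItem)])
        writer.writeheader()
        for item in items:
            writer.writerow(asdict(item))
```

If a file is later questioned, recomputing its SHA-256 and comparing it with the logged value shows whether it has changed since you recorded it.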

Step 2: File a Complaint With the Platform’s Grievance Officer

Every significant social media intermediary operating in India is required to appoint a Grievance Officer and a Chief Compliance Officer under the IT Rules 2021. Your complaint should go to the Grievance Officer.

Where to file on major platforms:

Instagram/Facebook (Meta): Go to the specific post, tap the three dots, select “Report,” then choose “It’s a manipulated photo/video of me.” You can also email Meta’s India Grievance Officer directly, using the separate grievance addresses Meta publishes for Facebook and Instagram in India.

X (Twitter): Report the post through the three-dot menu, selecting “Abusive or harmful” and then “Manipulated media.” You can also file a detailed report at help.twitter.com/forms/abusiveuser.

YouTube: Click the three dots below the video, select “Report,” then choose “Infringes my rights” and “Invades my privacy.” You can also use the YouTube Legal Removals page.

Telegram: Use the in-app report function. For faster action, email Telegram’s abuse team with the channel/group link and content details.

WhatsApp: For broadcast lists and groups, report through the app. For persistent issues, email WhatsApp’s support team directly.

Your complaint must include:

- Your full legal name and contact information

- A clear statement that the content is AI-generated or digitally manipulated media depicting you without your consent

- The exact URL(s) of the content

- A reference to the IT (Intermediary Guidelines) Rules 2021, as amended in February 2026, specifically the deepfake removal provisions

- A request for removal within the 3-hour compliance window

- Screenshots and documentation from Step 1

Critical detail: Explicitly state in your complaint that you are invoking the 3-hour removal provision. Don’t just file a generic content report. Reference the specific rule. This ensures your complaint is routed through the expedited deepfake removal process, not the standard content review queue.
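If you are reporting to several platforms, generating the same complaint text each time helps ensure nothing from the checklist is left out. Here is a minimal Python sketch, assuming a plain-text complaint. The `Complaint` fields and the wording are an illustrative template, not official language from MeitY or any platform, so adapt them to your case.

```python
from dataclasses import dataclass, field


@dataclass
class Complaint:
    full_name: str                 # your full legal name
    contact: str                   # email and/or phone number
    urls: list[str]                # exact URL(s) of the content
    attachments: list[str] = field(default_factory=list)  # files from Step 1


def complaint_text(c: Complaint) -> str:
    """Assemble a complaint covering each element of the checklist."""
    if not c.urls:
        raise ValueError("at least one URL is required")
    url_lines = "\n".join(f"  {i}. {u}" for i, u in enumerate(c.urls, start=1))
    attach_lines = "\n".join(f"  - {a}" for a in c.attachments) or "  - (none)"
    return (
        "To: Grievance Officer\n\n"
        f"I, {c.full_name}, am reporting AI-generated or digitally manipulated "
        "media that depicts me without my consent.\n\n"
        f"Content URL(s):\n{url_lines}\n\n"
        "I am invoking the 3-hour removal provision of the Information Technology "
        "(Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, as "
        "amended in February 2026, and request removal within 3 hours of receipt "
        "of this complaint.\n\n"
        f"Attached documentation:\n{attach_lines}\n\n"
        f"Contact: {c.contact}\n"
    )
```

Because the 3-hour invocation is built into the template, it cannot be accidentally dropped from any individual complaint.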

Step 3: File a Parallel Complaint on cybercrime.gov.in

While the platform processes your removal request, simultaneously file a complaint on the National Cyber Crime Reporting Portal.

Go to: cybercrime.gov.in

Select: “Report Other Cyber Crime” (or “Report Cyber Crime Related to Women/Children” if the deepfake is sexual in nature)

Category: Select “Social Media Related Crime” and sub-category “Profile Hacking / Identity Theft” or “Data Theft / Online Fraud” depending on the nature of the deepfake

Fill in all details: Platform name, URLs, your documentation, and a description of the deepfake content.

Save the complaint number. You’ll receive an acknowledgment with a complaint ID. Keep this safe. You’ll need it for escalation.

Why file here? The cybercrime complaint creates an official government record. If the platform doesn’t comply with the 3-hour rule, this complaint becomes evidence in any legal proceeding. It also triggers police investigation if the deepfake constitutes a criminal offense (which it usually does under multiple sections of Indian law).

Step 4: Send a Legal Notice (If 3 Hours Pass Without Removal)

If the platform has not removed the content within 3 hours of your complaint, you have grounds for legal escalation. A legal notice sent by a lawyer carries significant weight and often triggers immediate action.

The legal notice should reference:

Section 79 of the IT Act 2000 (intermediary safe harbor, which the platform loses if it fails to comply with the Rules)

The IT (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, as amended in February 2026

Section 66E of the IT Act (violation of privacy)

Section 67 and 67A of the IT Act (publishing obscene material/sexually explicit material in electronic form, if applicable)

Sections 354C and 354D of the IPC / corresponding BNS 2023 sections (voyeurism, stalking, if applicable)

The Digital Personal Data Protection (DPDP) Act, 2023 (processing personal data without consent)

Send the notice to: The platform’s Grievance Officer, the Nodal Contact Person (required under IT Rules), and the platform’s India legal team (addresses available on their transparency reports).

Step 5: Escalate to the Appellate Committee (If Still Not Resolved)

Under the IT Rules 2021, if you’re not satisfied with the Grievance Officer’s response (or lack of response), you can escalate to the Grievance Appellate Committee established by MeitY.

File your appeal at: gac.gov.in

The Appellate Committee must dispose of the appeal within 30 days. Their order is binding on the intermediary.

Step 6: Court Orders (For Persistent Cases)

If all administrative remedies fail, or if you need emergency relief, you can approach the High Court for:

Interim injunction (John Doe order): This is particularly effective because it covers all platforms at once. The Delhi High Court has been granting these orders in deepfake cases, most recently in the Bhuvan Bam personality rights case (April 2026). A John Doe order requires all platforms and internet service providers to remove the specified content, not just the one platform you initially reported to.

Damages and compensation: If the deepfake has caused you financial or emotional harm, you can claim compensation from the creator and potentially from platforms that failed to comply with the removal timeline.

Criminal prosecution: If the identity of the deepfake creator is known, criminal prosecution under the IT Act and BNS 2023 is possible. The cybercrime complaint from Step 3 can be the basis for this prosecution.
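Steps 4 to 6 form an escalation ladder. The Python sketch below encodes that ladder as a simple decision function, assuming the order this guide suggests (legal notice, then Appellate Committee, then court). That order is a practical sequence, not a legally mandated one, and the sketch ignores the option of going to the High Court straight away for emergency relief. The `Case` fields and messages are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

REMOVAL_WINDOW = timedelta(hours=3)  # platform's removal deadline after a complaint
GAC_WINDOW = timedelta(days=30)      # time the Appellate Committee has to decide


@dataclass
class Case:
    complaint_filed: datetime                    # when the platform complaint was filed
    removed: bool = False                        # has the content come down?
    legal_notice_sent: bool = False
    gac_appeal_filed: Optional[datetime] = None  # when the appeal was filed, if any


def next_step(case: Case, now: datetime) -> str:
    """Suggest the next escalation step in the order this guide describes."""
    if case.removed:
        return "removed: preserve evidence and monitor for re-uploads"
    if now <= case.complaint_filed + REMOVAL_WINDOW:
        return "wait: the platform is still inside its 3-hour window"
    if not case.legal_notice_sent:
        return "step 4: send a legal notice"
    if case.gac_appeal_filed is None:
        return "step 5: appeal to the Grievance Appellate Committee"
    if now <= case.gac_appeal_filed + GAC_WINDOW:
        return "wait: the Appellate Committee has up to 30 days to decide"
    return "step 6: consider approaching the High Court"
```

For example, a case where the complaint was filed four hours ago and nothing else has happened returns the legal-notice step.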

Legal Weapons Available to Deepfake Victims in India

India doesn’t have a single “deepfake law” (the proposed Deepfake Prevention and Criminalisation Bill, 2023 is still pending). But victims have multiple legal provisions across different statutes that collectively provide strong protection:

IT Act 2000

Section 66E: Punishes intentional capture, publication, or transmission of private images. Penalty: up to 3 years imprisonment and up to Rs. 2 lakhs fine.

Section 67: Publishing or transmitting obscene material in electronic form. First conviction: up to 3 years and up to Rs. 5 lakhs fine. Subsequent: up to 5 years and up to Rs. 10 lakhs.

Section 67A: Publishing sexually explicit material. First conviction: up to 5 years and up to Rs. 10 lakhs. Subsequent: up to 7 years and up to Rs. 10 lakhs.

Section 67B: If the deepfake involves a minor. Up to 5 years and up to Rs. 10 lakhs (first), up to 7 years and up to Rs. 10 lakhs (subsequent).

Bharatiya Nyaya Sanhita (BNS) 2023

The BNS replaced the Indian Penal Code in 2024. Relevant sections include:

Section 356 (Defamation): If the deepfake defames you. Up to 2 years simple imprisonment and/or fine.

Section 351 (Criminal intimidation): If the deepfake is used as a threat.

Section 77 (Voyeurism, replacing IPC 354C): If the deepfake involves intimate imagery. Up to 3 years (first offense), up to 7 years (repeat).

Section 78 (Stalking, replacing IPC 354D): If the deepfake is part of a pattern of monitoring or harassing a woman online. Up to 3 years (first offense), up to 5 years (repeat).

DPDP Act 2023

The Digital Personal Data Protection Act provides additional remedies:

Your image, voice, and likeness are personal data. Creating a deepfake using your personal data without consent violates DPDP. You can file complaints with the Data Protection Board once it’s fully operational.

IT (Intermediary Guidelines) Rules 2021 (Amended Feb 2026)

As discussed, platforms must remove deepfakes within 3 hours. Failure to comply results in loss of safe harbor protection under Section 79 of the IT Act.

What If the Deepfake Has Already Spread Widely?

Sometimes victims discover deepfakes after they’ve already been shared across multiple platforms, messaging apps, and websites. Here’s the escalation protocol:

Multi-Platform Removal

File complaints on every platform simultaneously. Don’t wait for one platform to respond before filing on the next. The 3-hour clock starts separately on each platform from when you file the complaint on that specific platform.
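Because each platform’s clock is independent, it helps to track the deadlines side by side. Here is a Python sketch, assuming you log the time you filed on each platform. The platform names and times below are placeholders, and `deadlines` and `overdue` are hypothetical helper names.

```python
from datetime import datetime, timedelta, timezone

IST = timezone(timedelta(hours=5, minutes=30), "IST")  # UTC+05:30
WINDOW = timedelta(hours=3)                            # per-platform removal window


def deadlines(filed_at: dict[str, datetime]) -> list[tuple[str, datetime]]:
    """Each platform's deadline runs from its own filing time; soonest first."""
    return sorted(((p, t + WINDOW) for p, t in filed_at.items()),
                  key=lambda pair: pair[1])


def overdue(filed_at: dict[str, datetime], now: datetime) -> list[str]:
    """Platforms whose 3-hour window has already passed at `now`."""
    return [p for p, due in deadlines(filed_at) if now > due]


filed = {
    "Instagram": datetime(2026, 3, 10, 20, 0, tzinfo=IST),
    "X": datetime(2026, 3, 10, 20, 10, tzinfo=IST),
    "YouTube": datetime(2026, 3, 10, 20, 25, tzinfo=IST),
}
for platform, due in deadlines(filed):
    print(f"{platform}: remove by {due:%H:%M} IST")  # 23:00, 23:10, 23:25
print(overdue(filed, datetime(2026, 3, 10, 23, 15, tzinfo=IST)))  # ['Instagram', 'X']
```

Filing on every platform within a short span keeps these deadlines close together, so one check covers all of them.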

Search Engine Deindexing

Even after the deepfake is removed from the hosting platform, it may still appear in Google search results (cached pages, thumbnails, etc.). File a Google removal request for the cached version. Google has specific processes for removing non-consensual intimate images and manipulated media from search results.

Messaging App Spread

If the deepfake has spread through WhatsApp or Telegram groups, the platform-level removal won’t catch copies that have been downloaded and re-shared. In these cases:

- File cybercrime complaints against identifiable sharers.

- Contact WhatsApp/Telegram about specific groups distributing the content.

- Consider a court order directing telecom operators to block access to specific URLs.

Image and Video Reverse Search

Use Google’s reverse image search and tools like TinEye to find copies of the deepfake on websites you might not know about. For each instance you find, file a separate removal request with that platform and document it for your legal case.
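When a reverse search turns up many copies, grouping the hits by website shows where each separate removal request needs to go. Here is a small Python sketch, assuming you paste the result URLs into a list yourself. It does not query Google or TinEye, and treating `www.example.com` and `example.com` as one site is a simplifying assumption.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def group_by_site(found_urls: list[str]) -> dict[str, list[str]]:
    """Group URLs by host so each site gets its own removal request."""
    groups: dict[str, list[str]] = defaultdict(list)
    seen: set[str] = set()
    for url in found_urls:
        url = url.strip()
        if not url or url in seen:
            continue  # skip blanks and exact duplicates
        seen.add(url)
        host = urlsplit(url).hostname or "unknown"  # hostname is lowercased
        if host.startswith("www."):
            host = host[4:]  # merge www.example.com with example.com
        groups[host].append(url)
    return dict(groups)
```

Each key in the result is one site to contact, and its list is the set of URLs to include in that site’s removal request and in your evidence log.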

For detailed guidance on removing images from Google results, see our guide on removing negative images from Google.

Protecting Yourself Proactively

While this guide focuses on removing deepfakes after they appear, prevention is better than cure. Here are proactive steps:

Limit high-resolution photos and videos on public profiles. Deepfake AI models need source material. The fewer high-quality images and videos of you that are publicly available, the harder it is to create convincing deepfakes.

Watermark your content. While not foolproof, watermarks make it harder to use your content for deepfakes without detection.

Set social media profiles to private where possible. On Instagram, switch to a private account if you don’t need public visibility.

Register for content authentication services. Some platforms are rolling out Content Credentials (C2PA standard) that digitally sign authentic content. While adoption is still early, this is the direction the industry is moving.

Monitor your online presence regularly. Use Google Alerts for your name and set up reverse image search monitoring. The sooner you discover a deepfake, the less time it has to spread. ORM monitoring tools can help automate this.

Build a strong personal brand. A well-established online presence with verified accounts, professional profiles, and positive content makes it easier to debunk deepfakes. When people can compare the deepfake against your verified, authentic content, the fake is easier to identify. See our guide on personal branding creation for more.

How FameNinja Handles Deepfake Removal Cases

At FameNinja, deepfake removal has become one of our fastest-growing service areas since the February 2026 rule change. Here’s how we approach these cases:

We file simultaneously on all platforms. When a client comes to us with a deepfake, we don’t file one complaint at a time. We document the content, prepare identical complaints for every platform where it appears, and file them all within 30 minutes. This maximizes the chance of complete removal within the 3-hour window.

We always file a parallel cybercrime complaint. Even if the client only wants the content removed (not criminal prosecution), the cybercrime complaint creates additional pressure. Platforms take complaints more seriously when they know a government complaint is also filed.

We prepare legal notices in advance. If the platform doesn’t comply within 3 hours, we have a templated legal notice ready to send immediately. The combination of an expired 3-hour deadline plus a cybercrime complaint plus a legal notice almost always results in rapid removal.

We handle the emotional component with care. Deepfake victims, especially those targeted with intimate or sexual deepfakes, are going through an extremely distressing experience. Our team is trained to handle these cases with sensitivity and urgency. Privacy is paramount, and we minimize the number of people who see the content during the removal process.

For broader context on how deepfake removal fits into personal online reputation management, including long-term recovery strategies after a deepfake incident, see our detailed guide.

What Happens After the Deepfake Is Removed

Removal is the first step, not the last. Here’s what you should do after the immediate crisis:

Preserve all evidence. Keep every screenshot, complaint number, response from platforms, and legal notice. You may need these for criminal prosecution or civil damages claims later.

Monitor for re-uploads. Deepfakes can be re-posted. Set up monitoring to catch any re-uploads quickly. The 3-hour rule applies to re-uploads as well, so you can file new complaints for each instance.

Consider pursuing criminal charges. If the creator is identifiable, filing an FIR (First Information Report) starts the criminal prosecution process. The cybercrime complaint you filed in Step 3 can be converted to an FIR.

Seek damages if appropriate. If the deepfake caused you financial harm (lost business, lost job opportunities) or severe emotional distress, you can file a civil suit for damages against the creator and potentially against non-compliant platforms.

Address search engine results. Even after removal, search engines may have cached the content. File deindexing requests with Google to remove cached versions and thumbnails from search results.

Take care of your mental health. Being targeted by a deepfake, especially an intimate or sexual one, is a form of digital violence. Don’t hesitate to seek emotional support from trusted people or professionals.

FAQ

How long do platforms have to remove deepfakes under Indian law?

Under the IT (Intermediary Guidelines) Rules 2021, as amended in February 2026, significant social media intermediaries must remove AI-generated or digitally manipulated content depicting a person without their consent within 3 hours of receiving a valid complaint from the victim or their authorized representative.

What happens if a platform doesn’t remove the deepfake within 3 hours?

If the platform fails to comply with the 3-hour removal window, it risks losing its intermediary safe harbor protection under Section 79 of the IT Act 2000. This means the platform becomes directly liable for the content. Practically, this is a severe consequence that platforms actively try to avoid. You can escalate to the Grievance Appellate Committee at gac.gov.in or pursue a court order.

Is creating a deepfake illegal in India?

Yes, though there’s no single “deepfake law.” Creating and circulating deepfakes can violate multiple provisions: Section 66E IT Act (privacy violation), Section 67/67A IT Act (obscene/sexually explicit material), relevant BNS 2023 sections on defamation and voyeurism, and the DPDP Act 2023 (processing personal data without consent). Maximum prison terms range from 2 years (defamation) to 7 years (for example, repeat offenses under Section 67A), depending on the nature of the deepfake.

Do I need a lawyer to file a deepfake removal complaint?

No, you can file platform complaints and cybercrime.gov.in complaints yourself without a lawyer. However, if the platform doesn’t comply within 3 hours, or if you want to pursue legal action (court orders, FIR, civil damages), a lawyer experienced in cyber law and IT Act cases is strongly recommended.

Can I get a deepfake removed from WhatsApp groups?

WhatsApp messages are end-to-end encrypted, which limits WhatsApp’s ability to proactively scan content. However, you can report specific messages and groups to WhatsApp. For persistent distribution through WhatsApp groups, a court order directing WhatsApp to block specific groups or content is the most effective route. You can also file cybercrime complaints against identifiable group administrators.

What if I don’t know who created the deepfake?

You can still file removal complaints with platforms and cybercrime.gov.in. The police can investigate and attempt to identify the creator through digital forensics, IP tracking, and platform cooperation. A court order (John Doe order) can direct platforms to preserve data that helps identify the creator. You don’t need to know the creator’s identity to get the content removed.

How do I prove that a video is a deepfake?

For the 3-hour removal complaint, you don’t need to prove it’s a deepfake. The platform must remove first and evaluate later. For legal proceedings, expert forensic analysis can identify deepfakes through artifacts in facial mapping, inconsistent lighting, audio mismatches, and metadata analysis. Several Indian forensic labs now offer deepfake detection services.

Does the 3-hour rule apply to websites, or only social media platforms?

The rule primarily applies to “significant social media intermediaries” (platforms with 5+ million registered users in India). However, other intermediaries (smaller platforms, hosting providers, websites) are also required to remove such content after receiving complaints, though with a standard 72-hour timeline rather than 3 hours. For websites hosted outside India, the legal process is more complex and may require court orders.